Technology advances at an astonishing pace: what is cutting-edge and innovative today becomes obsolete within a few years or, for some devices, within a few months. Being immersed in these topics every day, we can forget how this evolution has unfolded, or lose our capacity for wonder. That is why things that speak of the future tend to excite us, even when they are only prototypes or ideas: they make us imagine what life will be like some years from now.

But such predictions are not exclusive to our time. If anything, they have fallen off somewhat: years ago it was common practice to dream up machines, robots, holograms… in short, technology that would make human life easier. All of this boomed at the start of the 20th century, when sights were set on the following century, which was expected to bring the most radical change of all.

This became something of a genre in its own right, known as paleofuture. It gave rise to newspaper columns, magazines and specialized publications, all devoted to predicting what our lives would be like in the future thanks to technology. And now we have decided to travel back in time and look through those magnificent publications to see how we were supposed to be living today.

1899 – The dream of flying

At the end of the 19th century, the French artist Jean-Marc Côté imagined what the year 2000 would be like in a series of postcards that can be found in the National Library of France. In them, Côté depicted a futuristic vision in which people could fly and play several instruments at once, and in which animal husbandry and farms were run by machines.

1900 – There will be no cars in big cities

That is what John Elfreth Watkins proposed in an article published in ‘The Ladies’ Home Journal’, which was published between 1889 and 1907. The writer claimed there would be no cars in big cities; instead, they would travel only through well-lit, well-ventilated tunnels or above the cities. Reality turned out differently: so far this year, more than 10.5 million cars have been manufactured.

1900 – Trains at 241 km/h

In the same article, John Elfreth Watkins claimed that by the year 2000 there would be trains capable of traveling at 241 km/h. Well, not exactly in the year 2000, but China already operates the first bullet trains capable of running autonomously on the tracks at 350 km/h. If his previous prediction erred on the side of optimism, this time it is fair to say he fell short.

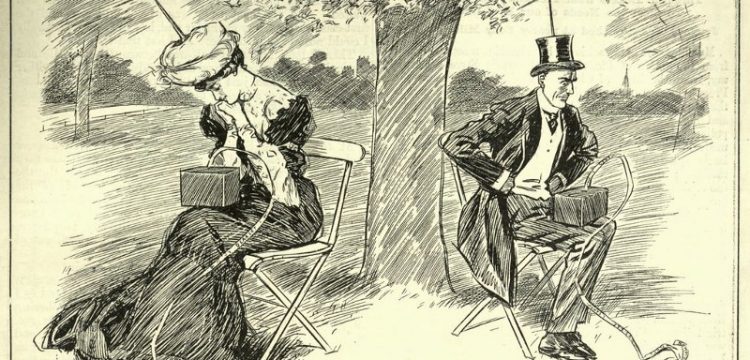

1910 – Cinema by mail

Continuing with the French postcards: by 1910 they had started to become popular as a fun way of imagining the future in the year 2000. They kept showing major advances, such as automatic machines in barbershops and flying police officers (because someone had to control air traffic), but the most interesting is undoubtedly the one above these lines, which presents a system for cinema by mail and, yes, it is in color with built-in audio.

1910 – Roofed cities

Another most peculiar postcard predicts a city completely roofed over to keep out rain, storms and the like. The postcard shows a square with a plate placed directly above it, which appears to hold glass panes of some kind that would let sunlight through. It certainly does not look very practical.

1910 – Our own personal aircraft

In recent months we have seen the launch of various prototypes of personal aerial vehicles, although these projects still have a long way to go. But as early as 1910 people sensed that mobility would head in this direction. Not with an airplane, of course, but with a personal zeppelin that would seat three people.

1910 – Flying mail carriers

The postcards have yielded a lot of material. In 1910, it was thought that in the year 2000 mail carriers would deliver our letters riding a kind of personal flying mount that inevitably recalls the Wright brothers’ airplane. In reality, paper letters have now been relegated to the background, although we are starting to see the first autonomous drones delivering packages.

1910 – Radium fireplaces

We all like to stay warm in winter and enjoy a fireplace that never stops crackling, but as early as 1910 people thought we would be using radium fireplaces. The idea has its loose ends, not least because radium is a million times more radioactive than uranium.

1912 – Video chats

Although the first demonstration of a video call did not come until 1964, courtesy of AT&T, that did not stop Hugo Gernsback, editor of the magazine ‘Modern Electrics’, from imagining in 1911 a device dubbed the “telephot”, which would allow long-distance calls while seeing the other party on a screen. This issue, although written in November 1911, was not published until February 1912.

1916 – The electric war tank

In the midst of the First World War, publications devoted themselves to predicting what the weapons of the future would look like. In this example we have the cover of the magazine The Electrical Experimenter, which shows a tank that moves by means of a rotating system. Most importantly, it was electric, so that it would not depend on fuel and risk accidents caused by explosions.

1932 – Robots and smart homes

This image, published in December 1932 in the San Antonio Light newspaper, shows how a person could control various aspects of his life from bed, from watching his wife do the shopping to having a robot butler bring him the day’s clothes.

1943 – The videophone

In an advertisement, the Canadian firm Seagram Company showed how it did global business, presenting the videophone as its tool for doing so. There is no record of the company ever actually using one, but it is striking how certain companies used advertising that showcased future technology to project a cutting-edge image.

1945 – Wireless connections

In 1945 television signals were not yet in general use for commercial broadcasts; only some countries and some broadcasters, such as the BBC, had that privilege. Radio reigned and communications were limited. Despite this, the magazine Wireless World was already imagining a world full of satellites capable of connecting us across continents, with live broadcasts to the whole world from a single point on the planet.

1950 – The grandfather of the smartphone, or the smartwatch?

In the early 1950s, the magazine Mechanix Illustrated showed a prototype, created in the United States, of a mobile phone with a screen. Yes, the obsession with seeing whom we were talking to persisted for many years. Nicknamed simply “the telephone of the future”, it had a circular design: on one side were physical buttons for dialing, and on the other a full-color screen. It could also be operated by voice and even had an antenna for receiving television signals.

1953 – The cities of the future

These images are incredible (and personal favorites). They show a vision of what life would be like in the 21st century. Suburban life would take place in neighborhoods full of domes; houses would cease to exist and we would each have our own ecosystem, where we could choose the temperature and our way of living. But the cover of the special ‘Future Cities’ issue is the memorable one: it imagines colonies in space, houses with solar panels and televisions on a wristband. True visionaries.

1957 – Face-to-face calls

As I have already mentioned, the past had a strange fixation on video calls. In this advertisement from March 1957, published in the magazine Scientific American, the Hughes company, known today for developing broadband satellites, announced its new Tonotron device, with which, according to the ad, we would be able to make calls as if we were watching television. In the end the product was never launched because of its high cost and the complex infrastructure it required.

1958 – The solar-powered car

In the golden age of the American motor industry, Chrysler, through its vice president James C. Zeder, made a bold prediction about what cars would be like in the future. They would be powered by a solar system that would charge a battery by means of silicon converters. Chrysler’s Sunray Sedan, of which only a sketch existed, was to be the manufacturer’s bet; the company claimed it was already working on the car and that it would see the light of day in the following years.

1958 – Distance education

Arthur Radebaugh, a famous futurist illustrator, published one Sunday in 1958 his vision of the classrooms of the future. Teachers would no longer need to worry about coming to school: they could teach several groups at once from a remote location, and every student would have a device with a camera, screen and keyboard within reach for taking part and asking questions. All of this grew out of an interview with Dr. Simon Ramo, a professor at the California Institute of Technology.

1959 – The solar house

The idea of houses that used solar energy was very popular in the second half of the 20th century. This illustration, which appeared in the Toronto Star Weekly newspaper, showed how houses would run on solar energy. Note, however, that the solar energy served only for heating during cold weather.

1963 – Gadgets connected to the television

The television was the device par excellence, so many forecasts revolved around it. This issue of the magazine Popular Science showed external devices that would connect to the television set to interact with what was on screen. In short, a kind of forerunner of video game consoles.

1968 – The telephone already had television and video calls (again)

Western Electric, together with Bell Telephone Laboratories, developed the “Picturephone set”, a device that connected to telephones (Bell only) and allowed users to watch television by means of a wired remote. Its real innovation, though, was that the (black-and-white) monitor had a camera for making video calls. Its price was very high for the time and only the first production run was sold. On top of that, the companies never made clear that two devices were needed for a video call, and so they faced a good number of lawsuits. Yes, people came to believe that with a single device they would be able to see the other person.

1965 and 1970 – The television

The magazine Popular Science, a reference point at the time, bet on major advances in television sets. In 1965 it claimed they would shrink considerably and become portable, while for 1970 it forecast a boom in multi-screen systems that would let us “watch” several programs at once.

1879 – The first prediction of the internet

In 1879 Edward Page Mitchell wrote ‘The Senator’s Daughter’, a story set in 1937 that told of how the senator’s daughter imagined the future of world politics. The tale describes a fantastic technology for reading news from anywhere in the world, based on an endless strip of printed paper that delivers all kinds of news about events as they happen in real time: a kind of Twitter, or a social network with a permanent connection.

1984 – Cities under domes

At the height of the Cold War, The Billings Gazette, a publication from Montana, United States, ran predictions of what the future would look like by the year 2020. The standout idea was living in cities covered by domes, each with its own ecosystem and untroubled by the weather outside. It was an idea motivated more by the supposed danger of nuclear war than by anything else.

1960 – Flying hospitals

Urgent medical care was a problem in the United States toward the end of the 1950s. That is how a weekly strip called ‘Closer Than We Think’ came to imagine flying hospitals capable of providing medical care in remote areas. Today the idea does not sound so far-fetched, given, for example, UNICEF’s recent project.

1904 – Flying machines

In 1904 the Minneapolis Journal newspaper launched a section called ‘Journal Junior’, devoted to predictions of what would happen in 1919 and 2019, most of them made by children and teenagers. The first imagined that by 1919 we would all be able to fly using a personal machine that would let us travel anywhere with ease. The idea arose after the Wright brothers’ first successful flight in 1903.

1904 – Electric broom

Today we have robots that vacuum and mop our homes, but in 1904 people believed that before the century was out they would see electric, almost automatic brooms, which would still depend on a person to steer them and tell them where to clean.

1968 – Underwater cities

The 1968 book ‘Explorers of the Deep: Man’s Future Beneath the Sea’ explored the possibility of humans living in the oceans by building structures that would let us live underwater. Once again, the idea was driven by the fear of war and by the rising pollution levels that were beginning to appear in the late 1960s.

1958 – Flying patrol cars

The idea of flying cars has been with us for several decades, and in 1958 the magazine Modern Mechanix offered the first glimpses of how it imagined the future of security: police officers aboard flying patrol cars. Looking closely at the image, they were not so far from what is being proposed in this field today, where the vehicles look more like giant drones than flying cars.

1945 – The house of the future would be all-in-one

This video is a gem. It shows US military propaganda presenting the houses of the future: pre-designed, all-inclusive structures that could be moved from one place to another and would even come with a waffle iron and a machine for roasting chickens. That said, the video warns that the houses were at an experimental stage, so the final design and features could differ from those shown.

1939 – Personal television

Yes, it looks like a virtual reality headset, but it is actually something dubbed the ‘television monocle’, which would allow viewers to watch television privately and without interruptions. The concept originated in England with the Gramophone Co. and was picked up by the magazine Radio-Craft in 1939. The device would show a 1.5 x 1 inch image by means of two mirrors set inside it at 45 degrees, which would project the image coming from a television set, since the device would not be wireless.

1958 – Taxis with enormous capacity

Another entertaining prediction from ‘Closer Than We Think’, which in 1958 imagined the taxis of the future. The idea showed a vehicle with room for more than 20 passengers, pets included, fitted with televisions and luxury features. The driver would sit in a kind of separate cabin on top.

1987 – Vacations on the Moon by 2018

In 1987 the sociologist Daniel Bell wrote an interesting article forecasting what we would see in 2018. Although he got some things right, such as nanotechnology and population growth, Bell claimed there would be space travel that would let us spend a vacation on the Moon or on the International Space Station. That has evidently not happened yet, although it seems we are not far off.

Source: https://www.xataka.com/historia-tecnologica/16-ejemplos-de-como-se-veia-el-futuro-en-el-pasado / Updated in March 2019 with more futuristic predictions from the past
